
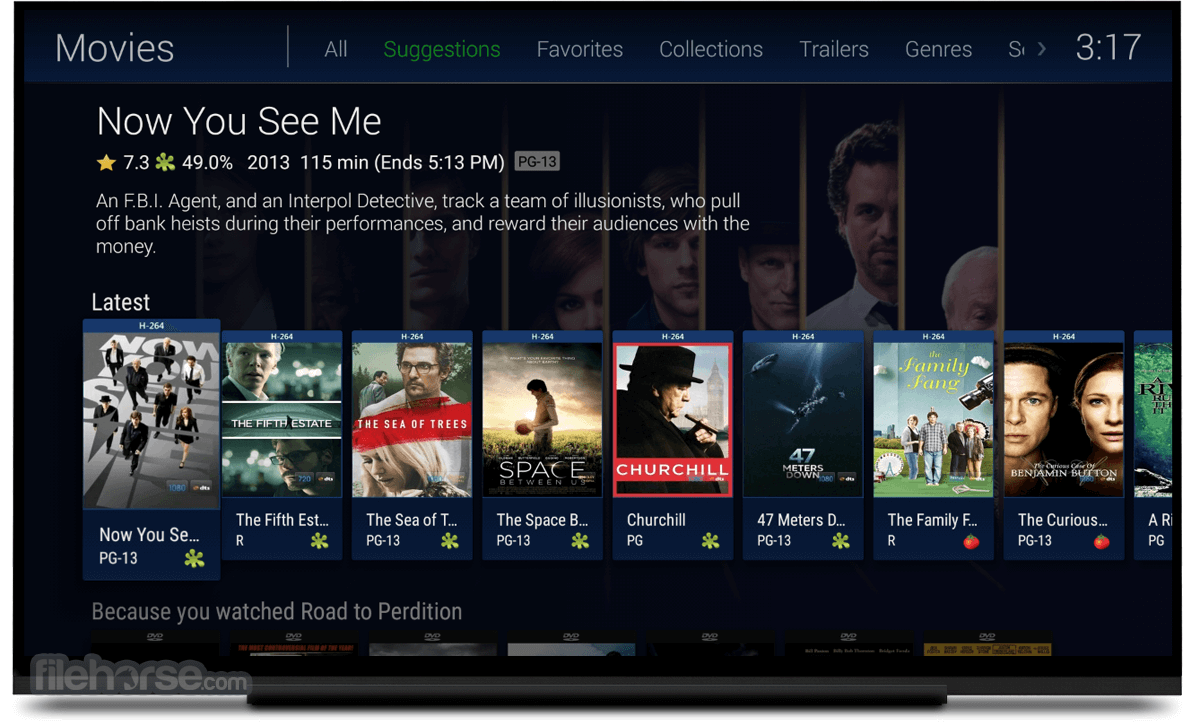

I even patched the drivers to unrestrict the number of concurrent streams the card can support for encoding/decoding and set a ram drive with 200GB of ram storage for temp transcode location. It does seem that I can do it if the source media is 264 codec at that high of a bitrate, but if it's 265 codec, my video card struggles to maintain a 1-to-1 play speed vs transcode speed. I was hoping to rely on transcoding on the fly from a 90Mpbs 4k down to 20Mbps 4k for both 265 and 264 codec. So I can't really stream any movies in original quality if their bit rate is higher than 45Mbps.

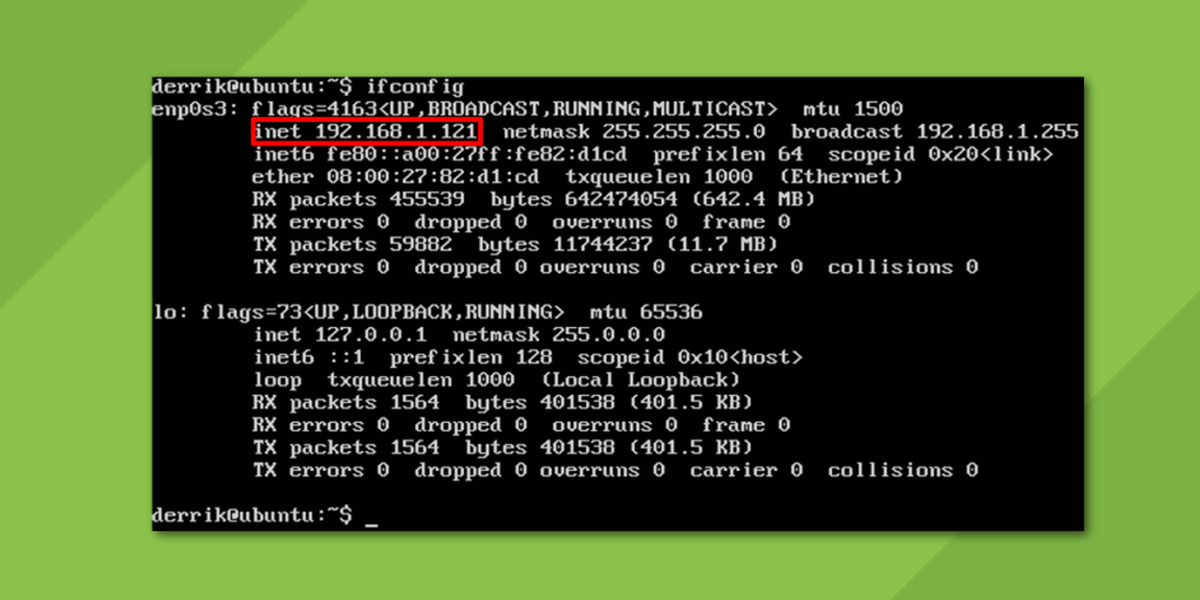

However, comcast limits their 1.2Gbps lines to peak for me is about 45Mbps upload. Also have comcast model in bridge mode so using just my own equipment. I know that my speed with Compcast can hit 1.2Gbps easily from my server (10BaseT NIC to 10BaseT Dedicate Lan router to 10BaseT WAN connection to Comcast 2.5Gps LAN. The titles he is having troubles with are 65Mbps 4k titles. However, when I share with my brother in another city, who also has 1.2Gbps internet speed, same as me, he gets throttled trying to do original quality. I also have several nvidia shield boxes in the house doing direct play 4k with over 40Mbps. It's an older 4th gen dual xeon server with 32 cores and an Geforce 1080 graphic card power transcoding. I have 1.2Gbps internet connection where the server is hosted. However, I like to edit /etc/docker/daemon.json, and force nvidia-docker to be used by default, by adding: "default-runtime": "nvidia",Īnd then restarting docker with sudo pkill -SIGHUP dockerd Launching a container with docker-nvidia GPU supportĮven with the default nvidia runtime, the magic GPU support doesn’t happen unless you launch a container with the NVIDIA_VISIBLE_DEVICES=all environment variable set.Click to expand.I have a similar problem as you do, but I do know what my problem is.

You could stop here, and manage your containers using the nvidia-docker runtime. RedHat / CentOS # If you have nvidia-docker 1.0 installed: we need to remove it and all existing GPU containersĭocker volume ls -q -f driver=nvidia-docker | xargs -r -I | xargs -r docker rm -fĬurl -s -L $distribution/nvidia-docker.repo | \ # Test nvidia-smi with the latest official CUDA imageĭocker run -d -runtime=nvidia -rm nvidia/cuda nvidia-smi

# Install nvidia-docker2 and reload the Docker daemon configuration etc/os-release echo $ID$VERSION_ID)Ĭurl -s -L $distribution/nvidia-docker.list | \

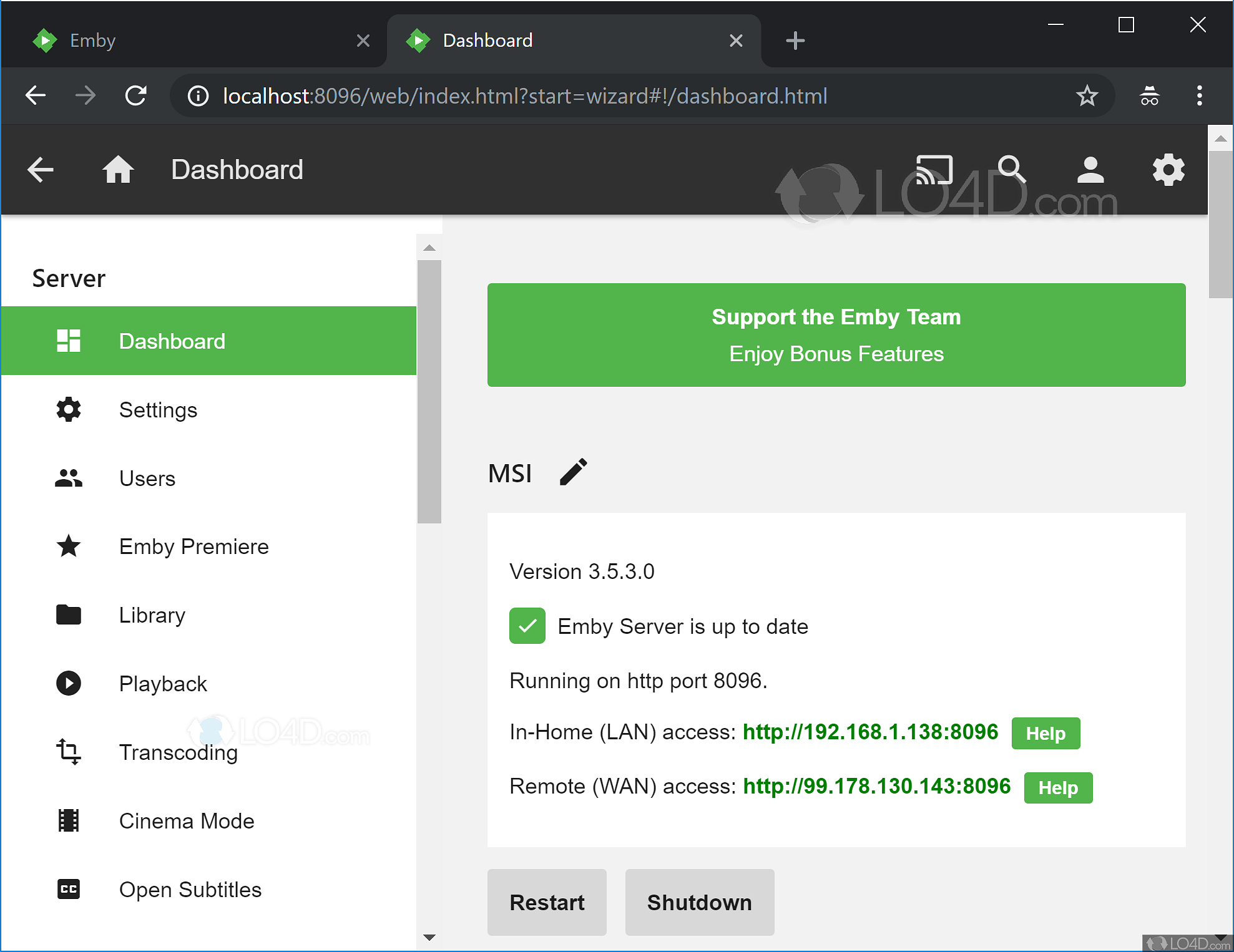

Install the latest NVIDIA drivers for your systemĭistribution=$(.If you just want the answer, follow this process: If you want to learn - read the NVIDIA Developer Blog entry. There’s a detailed introduction on the NVIDIA Developer Blog, but to summarize, nvidia-docker is a wrapper around docker, which ( when launched with the appropriate ENV variable! ) will pass the necessary devices and driver files from the docker host to the container, meaning that without any further adjustment, container images like emby/emby-server have full access to your host’s GPU(s) for transcoding! How do I enable GPU transcoding with Emby / Plex under docker? Loose access to the GPU from the host platform as soon as you launched the docker container.įortunately, if you have an NVIDIA GPU, this is all taken care of with the docker-nvidia package, maintained and supported by NVIDIA themselves.Have the ( quite large ) GPU drivers installed within the container image and kept up-to-date, and you’d.Figure out a way to pass through a GPU device to the container,.Normally, passing a GPU to a container would be a hard ask of Docker - you’d need to: GPU transcoding with Emby / Plex using docker-nvidia What’s the big deal about accessing a GPU in a docker container?
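The upload arithmetic in the comment above can be sketched as a tiny shell check. This is only an illustration: the function name `needs_transcode` is hypothetical, values are whole Mbps, and it ignores protocol overhead and bitrate peaks, so a real setup would want some headroom below the cap.

```shell
#!/usr/bin/env bash
# Hypothetical helper: decide whether a title fits through a capped uplink.
# Arguments are whole numbers in Mbps: <title bitrate> <upload cap>.
# Assumption: no allowance for protocol overhead or bitrate spikes.
needs_transcode() {
  local title_mbps="$1" upload_mbps="$2"
  if [ "$title_mbps" -gt "$upload_mbps" ]; then
    echo "transcode"   # title bitrate exceeds what the uplink can carry
  else
    echo "direct"      # title fits within the upload cap
  fi
}

needs_transcode 65 45   # the 65Mbps 4K titles against the ~45Mbps upload cap
needs_transcode 20 45   # the 20Mbps transcode target against the same cap
```

With the numbers from the comment, the 65Mbps titles come out as needing a transcode, while the 20Mbps target fits under the cap.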
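For the container launch step in the tutorial, a minimal sketch of what the command might look like is below. The helper name `gpu_run_cmd` is hypothetical, and it only prints the command rather than running it, so it can be inspected first. `emby/emby-server` is the image named in the tutorial; any ports, volumes, or other options your media server needs are left out and would have to be added.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print a docker command that requests the nvidia runtime
# and exposes all host GPUs to the container via NVIDIA_VISIBLE_DEVICES.
# Assumption: nvidia-docker2 is installed, so the "nvidia" runtime exists.
gpu_run_cmd() {
  local image="${1:-emby/emby-server}"   # default: image named in the tutorial
  printf 'docker run -d --runtime=nvidia -e NVIDIA_VISIBLE_DEVICES=all %s\n' "$image"
}

# Print the command for review; pipe it to "sh" only once it looks right.
gpu_run_cmd
```

If the nvidia runtime has been made the default in /etc/docker/daemon.json, the `--runtime=nvidia` flag should be redundant, but the environment variable is still needed.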
